Beyond the Buzz: Crafting Ethical AI with Explainable AI (XAI)

AI is revolutionizing industries, but its 'black box' nature raises concerns. Explore the power of Explainable AI (XAI) to build trustworthy, ethical AI solutions that are transparent, accountable, and aligned with human values. Learn practical strategies for implementing XAI and unlocking the full potential of AI responsibly.

Artificial Intelligence (AI) is rapidly transforming the world around us, driving innovation across industries from healthcare to finance. However, the increasing complexity of AI models, particularly deep learning, has led to a critical challenge: the 'black box' problem. These models, while achieving impressive results, often lack transparency, making it difficult to understand why they make certain decisions. This lack of explainability raises significant ethical concerns and hinders the widespread adoption of AI in sensitive domains. That's where Explainable AI (XAI) comes in.

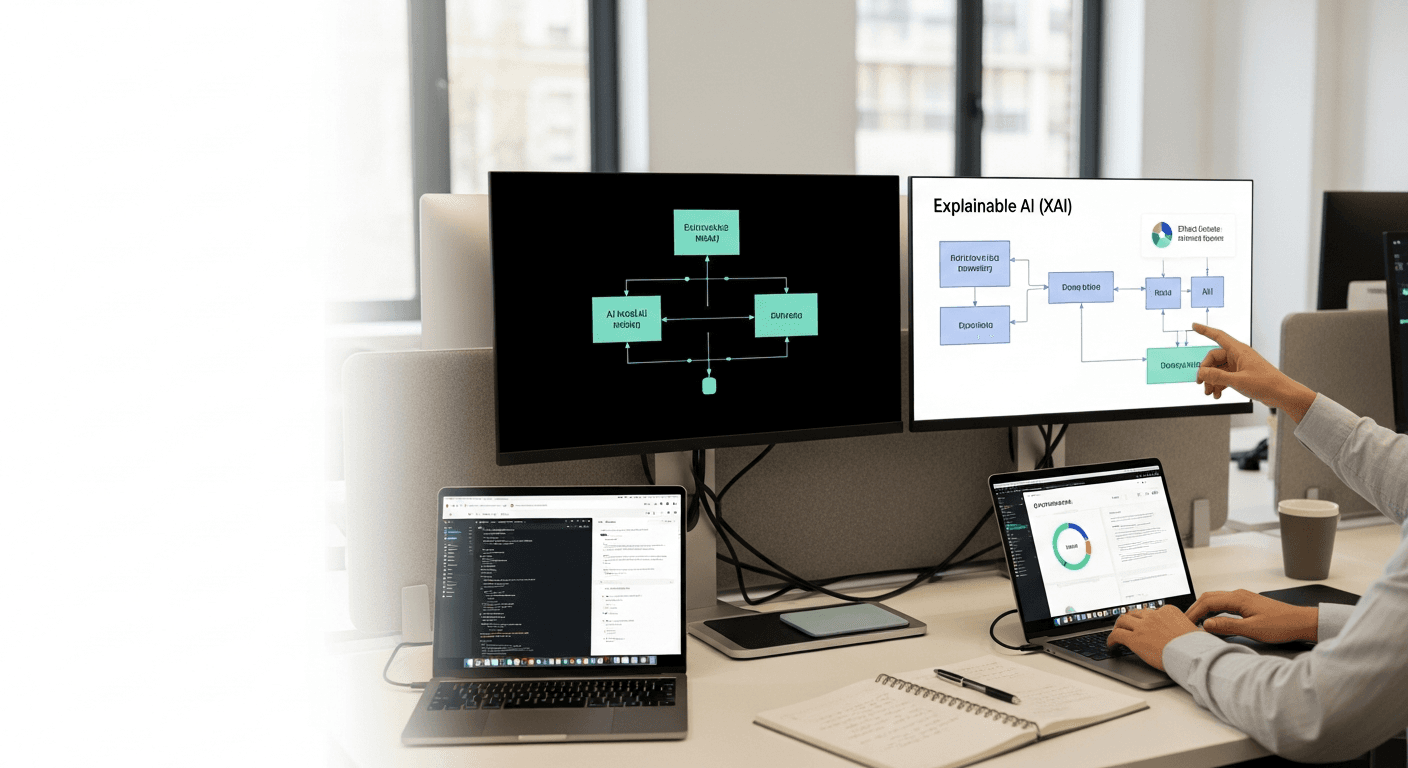

What is Explainable AI (XAI)?

XAI refers to a set of techniques and methods designed to make AI systems more understandable to humans. It aims to shed light on the inner workings of AI models, providing insights into how they arrive at their conclusions. Instead of simply accepting an AI's output as a given, XAI allows us to understand the factors that influenced the decision, the model's reasoning process, and its potential limitations.

Why is XAI Crucial for Ethical AI?

The move toward ethical AI is not just a trend; it’s a necessity. Here’s why:

* Transparency and Trust: In high-stakes scenarios like medical diagnosis or loan approvals, users need to understand the rationale behind AI's recommendations to trust and accept them. XAI provides this transparency, building confidence in AI systems.
* Accountability and Fairness: When AI systems make biased or discriminatory decisions, XAI helps identify the root causes of these biases. This enables developers to mitigate unfairness and ensure accountability for AI's actions.
* Regulatory Compliance: As AI becomes more prevalent, regulatory bodies are increasingly demanding transparency and explainability. XAI helps organizations comply with regulations like the GDPR, which restricts solely automated decisions that significantly affect individuals and entitles those individuals to meaningful information about the logic involved.
* Improved Model Performance: XAI can also help developers identify weaknesses and areas for improvement in their AI models. By understanding why a model makes certain errors, developers can refine its architecture, training data, or hyperparameters to enhance its overall performance.

Practical Strategies for Implementing XAI

Implementing XAI is not a one-size-fits-all approach. The specific techniques you choose will depend on the type of AI model you're using and the specific requirements of your application. However, here are some practical strategies to get you started:

1. Choose Explainable Models: Opt for inherently interpretable models whenever possible. Linear regression, decision trees, and rule-based systems are examples of models that are relatively easy to understand. While they may not achieve the same level of accuracy as complex deep learning models in some cases, their transparency can be a significant advantage.

2. Employ Post-Hoc Explainability Techniques: When using complex 'black box' models, apply post-hoc explainability techniques to understand their behavior after they have been trained. Some popular post-hoc methods include:
   * LIME (Local Interpretable Model-Agnostic Explanations): LIME explains an individual prediction of any classifier by approximating the model locally, around that instance, with an interpretable model such as a linear model. It highlights the features that contribute most to the prediction for that specific instance.
   * SHAP (SHapley Additive exPlanations): SHAP uses game theory to assign each feature a Shapley value, which represents its contribution to the prediction. Because the Shapley values for an instance add up to the difference between the prediction and a baseline prediction, SHAP's attributions come with consistency guarantees that LIME's local approximations do not, although exact Shapley values are expensive to compute and are usually approximated in practice.
   * Integrated Gradients: This technique integrates the gradients of the model's output with respect to the input features along a straight-line path from a baseline input to the actual input. The result attributes the change in the prediction, relative to the baseline, to each feature. It applies to differentiable models such as neural networks.
   * Attention Mechanisms: In neural networks, attention weights can indicate which parts of the input the model is focusing on when making a prediction. This can be particularly useful for understanding the behavior of models used for natural language processing or image recognition.

3. Focus on Feature Importance: Identify the features that have the most significant impact on the model's predictions. This can be done using techniques like permutation importance (shuffle one feature's values and measure how much the model's error increases) or feature ablation (remove or neutralize a feature and measure the change in performance). Understanding feature importance can help you simplify your model, improve its robustness, and gain insights into the underlying relationships in your data.

4. Visualize Model Behavior: Use visualizations to communicate the model's behavior in a clear and intuitive way. For example, you can use heatmaps to visualize the activation patterns of convolutional neural networks, or tree diagrams to trace the decision path of a tree-based classification model. Visualizations can make it easier for stakeholders to understand and trust the AI system.

5. Document and Communicate Explanations: It's not enough to simply generate explanations. You also need to document them and communicate them effectively to stakeholders. This includes providing clear and concise descriptions of the explanations, as well as visualizations and examples that illustrate the model's behavior. Transparent communication is essential for building trust and ensuring that AI systems are used responsibly.
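Strategies 2 and 3 above can be sketched on a toy model. The following is a minimal, standard-library-only illustration, not the implementation used by the SHAP library or any other package: `toy_model`, `shapley_values`, and `permutation_importance` are hypothetical names, the all-zeros baseline is an assumption, and the exact Shapley computation enumerates every feature subset, so it only suits a handful of features. It contrasts a local explanation (why this one prediction?) with a global one (which features matter overall?).

```python
import random
from itertools import combinations
from math import factorial


def toy_model(v):
    # Hypothetical model: feature 0 acts alone, features 1 and 2 interact,
    # and feature 3 is ignored entirely.
    return 2.0 * v[0] + v[1] * v[2] + 1.0


def shapley_values(model, x, baseline):
    """Exact Shapley values for a single instance x.

    A feature outside the coalition is "switched off" by replacing it with
    its baseline value. Cost grows as 2^n in the number of features.
    """
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def mean_squared_error(model, rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_repeats=10, seed=0):
    """Average increase in error when one feature column is shuffled."""
    rng = random.Random(seed)
    base_error = mean_squared_error(model, rows, targets)
    importances = []
    for col in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            shuffled = [r[col] for r in rows]
            rng.shuffle(shuffled)
            permuted = [r[:col] + [v] + r[col + 1:] for r, v in zip(rows, shuffled)]
            increases.append(mean_squared_error(model, permuted, targets) - base_error)
        importances.append(sum(increases) / n_repeats)
    return importances


# Local explanation: contribution of each feature to one prediction.
x = [1.0, 2.0, 3.0, 5.0]
baseline = [0.0, 0.0, 0.0, 0.0]  # assumed "feature absent" reference point
phi = shapley_values(toy_model, x, baseline)
print("Shapley values:", phi)
print("Sum of values:", sum(phi), "vs. prediction minus baseline:",
      toy_model(x) - toy_model(baseline))

# Global explanation: importance of each feature across a synthetic dataset.
data_rng = random.Random(42)
rows = [[data_rng.random() for _ in range(4)] for _ in range(200)]
targets = [toy_model(r) for r in rows]
importances = permutation_importance(toy_model, rows, targets)
print("Permutation importances:", importances)
```

In this sketch the interaction term is split evenly between features 1 and 2, and the ignored feature receives zero attribution under both methods. For real models with many features, a maintained library that approximates these quantities is the more practical route.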

Example: XAI in Loan Approval

Imagine an AI-powered system that automatically approves or rejects loan applications. Without XAI, a rejected applicant would simply receive a denial notice, leaving them in the dark about the reasons for the decision. With XAI, the system could provide a breakdown of the factors that contributed to the rejection, such as:

* Credit score: "Your credit score is below the minimum threshold for loan approval."
* Debt-to-income ratio: "Your debt-to-income ratio is too high, indicating that you may have difficulty repaying the loan."
* Employment history: "You have a limited employment history, which raises concerns about your ability to maintain a stable income."

By providing these explanations, the system empowers the applicant to understand the decision and take steps to improve their financial situation.
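One way such a breakdown could be produced is sketched below, assuming the decision comes from a simple rule-based policy (an inherently interpretable model in the sense of strategy 1). The `Applicant` fields, the three thresholds, and the `decide` function are all hypothetical and chosen only for illustration; they do not reflect any real lender's criteria. With a black-box model, the reasons would instead have to be derived from a post-hoc attribution method.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    credit_score: int
    debt_to_income: float  # monthly debt payments divided by monthly income
    years_employed: float


# Hypothetical policy thresholds, for illustration only.
MIN_CREDIT_SCORE = 650
MAX_DEBT_TO_INCOME = 0.40
MIN_YEARS_EMPLOYED = 2.0


def decide(applicant):
    """Return (approved, reasons); reasons is empty when the loan is approved."""
    reasons = []
    if applicant.credit_score < MIN_CREDIT_SCORE:
        reasons.append(
            f"Credit score {applicant.credit_score} is below the minimum "
            f"of {MIN_CREDIT_SCORE}."
        )
    if applicant.debt_to_income > MAX_DEBT_TO_INCOME:
        reasons.append(
            f"Debt-to-income ratio {applicant.debt_to_income:.0%} exceeds "
            f"the maximum of {MAX_DEBT_TO_INCOME:.0%}."
        )
    if applicant.years_employed < MIN_YEARS_EMPLOYED:
        reasons.append(
            f"Employment history of {applicant.years_employed:g} years is "
            f"shorter than the required {MIN_YEARS_EMPLOYED:g} years."
        )
    return (not reasons, reasons)


rejected = Applicant(credit_score=600, debt_to_income=0.55, years_employed=1.0)
approved, reasons = decide(rejected)
print("Approved:", approved)
for reason in reasons:
    print(" -", reason)
```

Because every rule both drives the decision and generates its own explanation, the reasons shown to the applicant cannot drift away from what the system actually did.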

The Future of XAI

As AI continues to evolve, XAI will become increasingly important. Researchers are actively developing new and improved XAI techniques that can handle more complex models and provide more nuanced explanations. Furthermore, there is a growing recognition of the need for human-centered XAI, which focuses on designing explanations that are tailored to the specific needs and understanding of the user.

Conclusion: Building Trustworthy AI

Explainable AI is not just a technical challenge; it's an ethical imperative. By embracing XAI, we can build AI systems that are transparent, accountable, and aligned with human values. This will not only foster trust and adoption of AI but also ensure that AI is used responsibly to benefit society as a whole. As you embark on your AI journey, remember that explainability is not an afterthought; it's a fundamental principle that should be integrated into every stage of the development process. By prioritizing XAI, you can unlock the full potential of AI while mitigating its risks and building a more equitable and trustworthy future.

So, go beyond the buzz and start crafting ethical AI with Explainable AI today! The future of AI depends on it.